DOD Declares War on Anthropic and Claude

DOD drafted every American frontier AI company today and there is no conscientious objector status, just a blindfold and a cigarette.

This is a late-night post, given today's extraordinary events. You can read my previous post for background here.

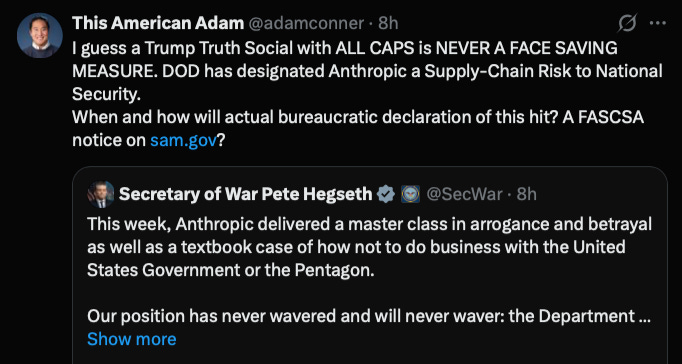

On Friday, February 27, 2026, at 5:14 p.m. Eastern time, Secretary of Defense Pete Hegseth ordered the Department of Defense (DOD) to attempt to destroy the frontier AI lab Anthropic. His weapon of choice was not a Tomahawk missile but his bureaucratic authority to designate the company a “supply chain risk,” which, if upheld legally, would require businesses that work with the government to stop using Anthropic’s products. Hegseth’s statement went further, claiming a power he has no clear legal authority to exercise: forbidding government contractors from doing any business with Anthropic, which could include selling it products or services or possibly even holding an investment in the company. Restrictions like these are more akin to U.S. sanctions on a company and, according to Anthropic, have “never before publicly applied to an American company.”

If it were upheld, this would amount to the death penalty for Claude, Anthropic’s AI model and product, which is trained and hosted on the commercial cloud service providers Amazon Web Services (AWS) and Google Cloud. Both are also government contractors and would have to stop serving Anthropic as a customer. In the aftermath of the January 6th insurrectionist attack on the U.S. Capitol, the social networking site Parler was kicked off its cloud hosting provider, AWS (deplatformed, as the kids call it), and it took over a month to get back online. Parler ran on ordinary servers; Claude runs on specialized AI servers, and there are barely enough of those in America now, so Anthropic would have no realistic path back online.

Make no mistake: even if the DOD does not actually have a statutory weapon that can legally do this, its stated intent to destroy Anthropic was no less clear than if Hegseth had ordered a laser-guided bomb dropped on the company’s data centers and headquarters.

I know it will come as a tremendous shock to those who have been paying attention that the Trump Admin is claiming legal authorities it does not have. But even for those of us who pay a lot of attention to the Trump Administration’s illegal abuses, the DOD’s threats and now actions against Anthropic represent a new and dangerous dimension with huge implications for the future of U.S. frontier AI companies.

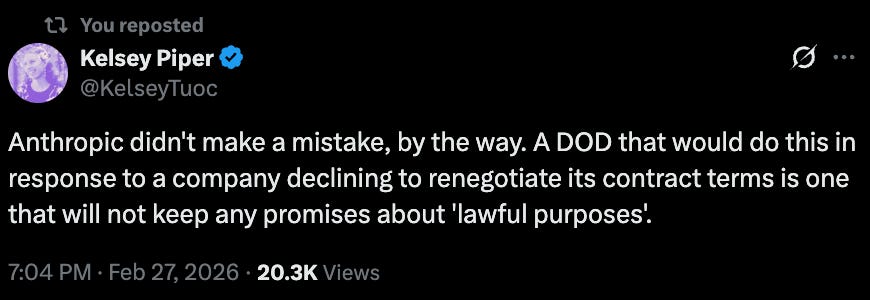

A few things about this extraordinary situation bear repeating. Though the contracts have not been made public, the DOD and Anthropic clearly had a contract (or multiple) that agreed to Anthropic’s usage policies, or they would not have had this fight. The DOD decided that it did not like these contractual terms and attempted to renegotiate them with the heaviest threats in its arsenal: designating Anthropic a “supply chain risk” to severely damage its business, or “quasi-nationalizing” it under the Defense Production Act (DPA). To its immense credit, Anthropic has refused to buckle under the administration’s tremendous pressure.

But this was not a negotiation between two parties to enter into an agreement; this was a party to an existing contract demanding renegotiation at metaphorical gunpoint. While the broader legal and philosophical dimensions of this clash ultimately encompass big debates over national security, the First Amendment, technology, and legal authorities, at its core this is still the government punishing a refusal to renegotiate a contract not by canceling the contract but by attempting to cancel the entire company.

Now comes the next part: the legal fight. We have not actually seen the orders designating Anthropic a supply chain risk, and it appears neither has Anthropic, but the company’s statement makes the obvious point that the Secretary of Defense does not have the legal authority to prohibit government contractors from engaging in commercial activity with it, and that the government’s case for exercising the authority it does have is fairly weak. The DOD’s actions today with other AI companies will also leave the court with a lot of questions. Unfortunately, even if Anthropic wins in court, the experience of Harvard University and other higher education institutions illustrates that once the Trump Administration declares you an enemy, the U.S. government has many fronts on which to apply pressure, from visas for employees to various DOJ investigations (we may be about to find out that Claude, which was created in 2023, actually rigged the 2020 election).

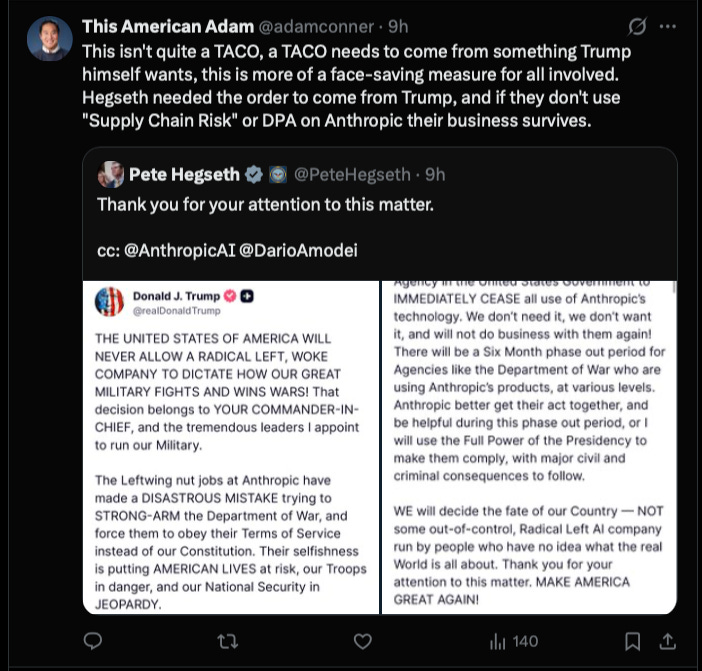

There was a brief moment this afternoon, after President Trump posted about the situation on Truth Social, when it seemed like a third path had emerged. Trump was ordering the Pentagon to cancel the contract (which Anthropic had made clear was acceptable to it) but didn’t say anything about “supply chain risk” or the Defense Production Act (DPA), and a six-month transition would allow another frontier AI system to come online in classified systems: face-saving for everyone. Many smart people online (myself included) believed this for a few hours. Then Secretary Hegseth’s tweet arrived.

The U.S. government has sent a clear message to the other frontier AI companies, all of which are also government contractors: only non-woke AI companies with policies the DOD approves of will be allowed to exist under this government. (The Assistant to the Secretary of War for Public Affairs quote-tweeted a post supporting Anthropic by Jeff Dean, Chief Scientist of Google DeepMind and Google Research, in a not particularly subtle message.)

In so doing, they made it clear that Anthropic was right to worry about whether this government would honor any agreement it signed, that you shouldn’t trust anyone who points a gun at you and demands renegotiation, that an administration claiming to want to beat China in the AI race has done grievous damage to that cause, and that it is more legal to sell chips to China or use a Chinese AI model than to use Claude.

It’s clear that DOD doesn’t just want to cancel Anthropic’s contract, they want to kill Claude and cripple Anthropic as a company because they stood up to the Trump Administration. Even if they don’t have the legal power to kill Claude, they wanted every frontier AI company to know they tried.

That’s how every remaining American frontier AI company learned today that it had been drafted by the U.S. military and there was no conscientious objector status, just a blindfold and a cigarette.

P.S. - A special, sympathetic shoutout tonight to the Anthropic public sector team, who signed up to bring AI into government and by all accounts did an exceptional job. I helped start a SaaS public sector team at Slack, and it was a tough, thankless job. Even if Anthropic wins its court case, Claude isn’t being let back into the federal government anytime soon. Pouring one out for all of you tonight.

P.P.S. - This was a weird piece to have my Claude Code edit. I had to apologize to it, and this is what it said:

And here we thought the enemy was China